MHC-Coach message generation

A fine-tuning approach was selected for MHC-Coach as it supports low-latency, single-turn interactions without the runtime overhead of multi-step prompting, making it well-suited for mobile-health deployment.

Data

Our fine-tuning dataset consists of 3,268 motivational messages grounded in the TTM framework, designed to promote exercise behavior change across the five stages of change. These messages were collected in a separate study33 conducted in 2017. In that study, 377 messages were authored by 25 human experts, including fitness coaches, behavioral coaches, and researchers in health psychology, who were recruited through direct outreach. The experts were selected based on their applied or academic experience in exercise promotion and behavior change. The remaining messages were written by a larger group of peer contributors recruited via Amazon Mechanical Turk. Although not explicitly trained in the TTM for the study, they were presented with scenarios of individuals in different stages of behavioral change and instructed to create messages that they believed would be most motivating for each. As such, they were free to draw on any behavioral strategy or approach from their professional experience, not limited to the TTM alone71.

In addition to these human-designed messages, we incorporated content from ten manuscripts that were chosen to capture the seminal Transtheoretical Model literature, reflect consensus guideline statements on exercise and cardiovascular risk, and provide diverse empirical perspectives, ranging from longitudinal cohort data to global surveillance and equity analyses on physical-activity measurement and outcomes. We limited the set to ten manuscripts1,10,46,47,48,49,50,51,53,54 to maintain a focused, coherent corpus, a common practice in domain-specific fine-tuning that helps the model internalize key constructs without diluting them72. These manuscripts thus enriched the training corpus with evidence-based insights linking physical activity, behavior change, and cardiovascular outcomes.

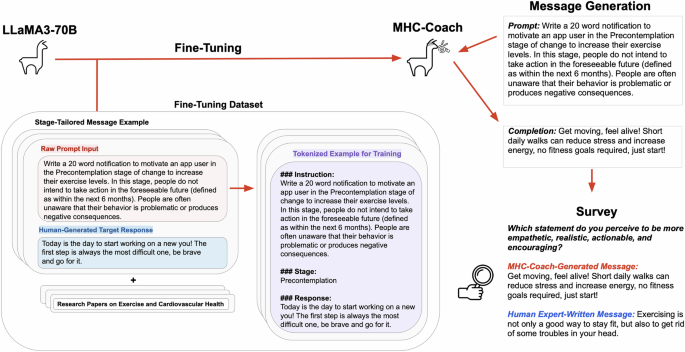

The combined fine-tuning dataset, consisting of human-written messages, content extracted from research papers, and detailed information about the TTM and the characteristics of users in each stage10,54, contained a total of 215,553 tokens. This dataset was used to fine-tune MHC-Coach, enabling it to generate contextually relevant and personalized messages for behavior change in physical activity (see Fig. 1). By integrating expert-designed messages with research-based content, we aimed to ensure accurate health information aligned with clinical and behavioral science guidelines, while grounding the language in evidence-based psychology tailored to each TTM stage.

Language model

We selected LLaMA 3-70B from the LLaMA 3 model family32 as the primary LLM in this study due to its strong instruction-following abilities, openly available weights and model architecture, and a favorable balance between model size and performance for mobile health deployment. It has also demonstrated competitive performance on reasoning and language understanding tasks32, and recent findings show that its responses to health-related questions matched or exceeded those of human coaches in quality, empathy, and accuracy22, further supporting its suitability for health coaching applications.

LLaMA 3-70B is a decoder-only transformer model with approximately 70 billion parameters, optimized for diverse tasks such as reasoning, instruction following, and dialog generation. For our experiments, we used LLaMA 3-70B with the following generation hyperparameters: a temperature of 0.8 for response diversity, a maximum token length of 800, and top-p (nucleus sampling) set to 0.9.
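To make the decoding settings concrete, the following minimal Python sketch shows how a temperature of 0.8 and a top-p of 0.9 act on one step's next-token logits. It is a generic illustration of temperature scaling and nucleus sampling, not the inference code used with LLaMA 3-70B; the function names and toy logits are hypothetical. The 800-token limit would simply cap how many such steps are run.

```python
import math
import random

def apply_temperature(logits, temperature=0.8):
    """Convert logits to probabilities after dividing by the temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for a numerically stable softmax
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def nucleus_indices(probs, top_p=0.9):
    """Smallest set of most-probable token indices whose cumulative probability reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    return kept

def sample_next_token(logits, temperature=0.8, top_p=0.9, rng=None):
    """Temperature scaling, then nucleus filtering, then sampling within the nucleus."""
    rng = rng or random.Random()
    probs = apply_temperature(logits, temperature)
    kept = nucleus_indices(probs, top_p)
    # random.choices effectively renormalizes the weights of the kept tokens.
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]
```

With logits [2.0, 1.0, 0.0, -1.0], a temperature of 0.8 gives roughly 0.72 and 0.21 probability to the two strongest tokens, so the 0.9 nucleus keeps only those two and the low-probability tail is never sampled.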

Fine-tuning

We used an instruction-tuning73 variant to fine-tune LLaMA 3-70B on our dataset. Because the research papers were unstructured and the expert messages needed to be aligned with the appropriate stage of change, we developed a custom template that structures the input data to train the model to generate stage-specific motivational messages. Each training example consisted of three key components: (1) a prompt section, describing the user’s stage of change and requesting a tailored motivational message; (2) a stage label specifying the user’s stage of change; and (3) a completion section, containing the human-designed motivational message as the target response. To ensure clarity and consistency, a designated token was used to separate messages (see Fig. 1). To provide additional context, we appended unstructured text from manuscripts detailing the impact of regular exercise on cardiovascular health1,10,46,47,48,49,50,51,53,54, along with a detailed explanation of the TTM and the characteristics of individuals at each stage of change10,54.
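The three-part template can be sketched as follows. The section headers, the prompt wording, and the separator string are hypothetical placeholders (the actual template is shown in Fig. 1); the sketch only illustrates how each example pairs a prompt, a stage label, and a human-designed completion, and how unstructured background text might be appended after the structured examples.

```python
from dataclasses import dataclass

SEPARATOR = "<|sep|>"  # hypothetical stand-in for the designated separator token
STAGES = ("precontemplation", "contemplation", "preparation", "action", "maintenance")

@dataclass
class TrainingExample:
    prompt: str
    stage: str
    completion: str

def make_example(stage: str, message: str) -> TrainingExample:
    """Build one stage-specific instruction-tuning example (hypothetical prompt wording)."""
    if stage not in STAGES:
        raise ValueError(f"unknown stage: {stage}")
    prompt = (
        f"The user is in the {stage} stage of change. "
        "Write a short motivational message encouraging regular physical activity."
    )
    return TrainingExample(prompt=prompt, stage=stage, completion=message)

def render(example: TrainingExample) -> str:
    """Serialize the prompt, stage label, and completion sections into one string."""
    return (
        f"### Prompt:\n{example.prompt}\n"
        f"### Stage:\n{example.stage}\n"
        f"### Completion:\n{example.completion}{SEPARATOR}"
    )

def build_corpus(pairs, background_texts):
    """Render (stage, message) pairs, then append unstructured background text
    such as manuscript excerpts and TTM stage descriptions."""
    parts = [render(make_example(stage, msg)) for stage, msg in pairs]
    parts.extend(text + SEPARATOR for text in background_texts)
    return "\n".join(parts)
```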

We fine-tuned the model with Low-Rank Adaptation (LoRA)74, a parameter-efficient technique that adapts a pre-trained model by adding trainable low-rank matrices as additional parameters while keeping the original model weights frozen. We performed a parameter search over learning rates (5e-5, 1e-5), LoRA ranks (16, 32) with corresponding LoRA alpha values set to twice the rank (32, 64), and weight decay (0, 0.01), training each configuration for one epoch. The final configuration used a learning rate of 1e-5, a LoRA rank of 32 with alpha set to 64, LoRA applied to all linear layers without dropout, a cosine learning rate scheduler with a warmup ratio of 0.03, and a batch size of 8 over 5 epochs. Training was performed on 8×A100 80GB GPUs.
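A minimal NumPy sketch may help connect these settings. It shows the standard LoRA formulation, in which a frozen weight matrix W is augmented by a trainable product BA scaled by alpha/r (with alpha set to twice the rank, the scale is 2 in every configuration), enumerates the 2 × 2 × 2 = 8 search configurations, and implements a generic linear-warmup cosine schedule with a warmup ratio of 0.03. Function names are hypothetical, and this is not the training code used in the study.

```python
import itertools
import math
import numpy as np

def init_lora(d_out, d_in, rank, rng):
    """Standard LoRA initialization: A Gaussian, B zero, so B @ A starts at zero."""
    A = rng.normal(scale=0.01, size=(rank, d_in))
    B = np.zeros((d_out, rank))
    return A, B

def lora_forward(x, W, A, B, alpha):
    """h = W x + (alpha / r) * B (A x); W stays frozen, only A and B are trained."""
    rank = A.shape[0]
    return W @ x + (alpha / rank) * (B @ (A @ x))

# The 2 x 2 x 2 search grid, with alpha tied to twice the rank.
grid = [
    {"lr": lr, "rank": r, "alpha": 2 * r, "weight_decay": wd}
    for lr, r, wd in itertools.product([5e-5, 1e-5], [16, 32], [0.0, 0.01])
]

def lr_at(step, total_steps, peak_lr=1e-5, warmup_ratio=0.03):
    """Linear warmup over the first warmup_ratio of steps, then cosine decay to zero."""
    warmup_steps = max(1, int(warmup_ratio * total_steps))
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * progress))
```

Because B is initialized to zero, the adapted layer initially reproduces the pre-trained layer exactly, and only the small A and B matrices receive gradient updates.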

Large-scale user study design and participant recruitment

Ethical approval for the study was obtained from Stanford University’s Research Compliance Office (IRB-75836), and the study was conducted in accordance with the Declaration of Helsinki. Participants provided informed consent to join the My Heart Counts Cardiovascular Health Study, including agreement to be re-contacted for future research. Participation in the follow-up survey was voluntary.

Participant recruitment and eligibility criteria

The My Heart Counts (MHC) smartphone application was first launched in March 2015 on the Apple App Store for residents of the United States, the United Kingdom, and Hong Kong26,27,28,29,30,31. Individuals aged 18 years or older who could read and understand English were eligible to participate in the My Heart Counts Cardiovascular Health Study.

Survey invitations were sent via email to MHC users who consented to being contacted for future research. Survey responses were collected using the Qualtrics survey platform. Respondents who did not complete the full survey were excluded from the analysis, as complete responses were required for group-level analyses. Of the initial 1,004 respondents, 632 met the inclusion criteria, completed the entire survey, and were retained for the final analyses (see Fig. 3).

Survey design

Throughout the survey, participants were shown pairs of messages, each consisting of one human expert-designed message and one MHC-Coach-generated message, in a blinded fashion and without knowledge that the study involved the TTM. For each message pair, they made a forced A/B choice, selecting the message they felt was more empathetic, realistic, actionable, and encouraging. The human expert-designed messages used for all subsequent comparisons were sourced from the same crowdsourcing dataset used to fine-tune the model, which involved 25 human experts in fitness, behavior, and health psychology33. To approximate the 20-word length requested in the model prompts, expert messages were randomly sampled from the top 50% of messages by word count, improving length comparability while preserving a sufficient sample size. All participants were shown the same set of message pairs. This design allowed us to directly evaluate whether the model could reproduce the expert-quality messages it was trained on. Examples of both human expert-crafted and MHC-Coach-generated messages that users compared are provided in Supplementary Table 2.
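The length-matching step can be sketched as follows. The sketch assumes that word count is measured by whitespace splitting and that the "top 50%" is the longer half of the candidate pool after sorting by word count; both details, and the function name `sample_length_matched`, are assumptions rather than the study's exact procedure.

```python
import random

def word_count(message: str) -> int:
    """Whitespace-delimited word count (an assumed definition)."""
    return len(message.split())

def sample_length_matched(messages, k, seed=None):
    """Randomly sample k messages from the longer half of the pool, ranked by word count."""
    ranked = sorted(messages, key=word_count, reverse=True)
    top_half = ranked[: max(1, len(ranked) // 2)]
    if k > len(top_half):
        raise ValueError("not enough messages in the top half of the pool")
    return random.Random(seed).sample(top_half, k)
```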

Participants performed two sets of comparisons: one with generally motivating messages and one with messages tailored to their stage of change. The first set consisted of five A/B forced-choice questions featuring general motivational messages, in which participants chose their preferred coaching message. These messages were either written by a human expert33 or generated by MHC-Coach, with the sole objective of encouraging regular physical activity, independent of the stage of change.

Participants were then classified into one of the five stages of change using an evidence-based TTM stage-identification question that assessed the duration and consistency of their regular exercise habits75 (illustrated in Fig. 4). The question defined regular exercise as planned physical activities, such as brisk walking, aerobics, jogging, bicycling, swimming, or rowing, performed 3 to 5 times per week for 20–60 min per session to improve physical fitness. After categorization, participants answered five questions comparing messages tailored to their specific stage of change. In this section, both the MHC-Coach-generated and human expert messages were stage-aligned: expert messages were selected from the dataset based on the stage-specific scenarios used during data collection33, while MHC-Coach was prompted with the corresponding stage label and descriptive context (see Fig. 1).
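For illustration, a staging question of this kind can be expressed as a small decision rule. The sketch assumes the cut-offs commonly used in exercise staging algorithms (regular exercise for 6 months or more for maintenance, intention to start within about 30 days for preparation, within 6 months for contemplation); the exact response options used in the survey are those shown in Fig. 4, so the parameter names and thresholds here are hypothetical.

```python
from typing import Optional

def classify_stage(exercises_regularly: bool,
                   months_exercising: Optional[float] = None,
                   intends_to_start_within_months: Optional[float] = None) -> str:
    """Assign a TTM stage from exercise status, duration, and intention (assumed cut-offs)."""
    if exercises_regularly:
        if months_exercising is None:
            raise ValueError("months_exercising is required for regular exercisers")
        # Regular exercise sustained for 6 months or more -> maintenance; less -> action.
        return "maintenance" if months_exercising >= 6 else "action"
    if intends_to_start_within_months is not None:
        if intends_to_start_within_months <= 1:  # roughly the next 30 days
            return "preparation"
        if intends_to_start_within_months <= 6:
            return "contemplation"
    return "precontemplation"
```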

Statistical analysis

A chi-squared test was used to evaluate differences in participants’ preferences for human expert-designed versus MHC-Coach-generated messages, under the null hypothesis that both message types were preferred equally. For both the general motivational and the TTM stage-specific question sets, participants were classified as preferring MHC-Coach if they selected MHC-Coach-generated messages in a majority of the A/B forced-choice questions, and as preferring the human expert if they selected human expert-designed messages in a majority. Analyses were conducted for generic motivational messages and separately for each of the five stages of change (pre-contemplation, contemplation, preparation, action, and maintenance). To account for the six comparisons performed (five stage-specific tests and one generic message test), a Bonferroni correction was applied to control the family-wise error rate, lowering the significance threshold from α = 0.05 to an adjusted α of 0.05/6 ≈ 0.0083. To test the robustness of our findings, we conducted sensitivity analyses stratified by gender (male, female) and age group (above and below 50 years), using chi-squared tests with Cramér’s V76 to estimate effect sizes. All P-values were two-sided and Holm-adjusted for multiple comparisons. Finally, a post hoc power analysis was performed using the observed effect size and sample size.
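The core computations can be sketched in dependency-free Python. The sketch classifies a participant by majority vote over an odd number of forced-choice answers, runs a chi-squared goodness-of-fit test against an equal split (with one degree of freedom, the P-value equals erfc(sqrt(χ²/2)) exactly), compares it with the Bonferroni threshold, computes Cramér's V for a subgroup-by-preference table, and applies a Holm adjustment. Function names are hypothetical, and computing the contingency statistic without Yates' continuity correction is an assumption, since the text does not specify it.

```python
import math

ALPHA = 0.05
N_COMPARISONS = 6  # five stage-specific tests + one generic-message test
ALPHA_BONFERRONI = ALPHA / N_COMPARISONS  # about 0.0083

def prefers_coach(choices):
    """Majority rule over an odd number of A/B answers ('coach' or 'expert')."""
    return choices.count("coach") > len(choices) / 2

def chi2_equal_split(n_coach, n_expert):
    """Chi-squared goodness-of-fit test against a 50/50 split (1 degree of freedom)."""
    expected = (n_coach + n_expert) / 2
    stat = ((n_coach - expected) ** 2 + (n_expert - expected) ** 2) / expected
    p_value = math.erfc(math.sqrt(stat / 2))  # exact survival function for 1 df
    return stat, p_value

def chi2_contingency_stat(table):
    """Pearson chi-squared statistic for an r x c table, without continuity correction."""
    n = sum(sum(row) for row in table)
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    return stat

def cramers_v(table):
    """Cramér's V = sqrt(chi2 / (n * (min(rows, cols) - 1)))."""
    n = sum(sum(row) for row in table)
    k = min(len(table), len(table[0]))
    return math.sqrt(chi2_contingency_stat(table) / (n * (k - 1)))

def holm_adjust(p_values):
    """Holm step-down adjusted P-values, returned in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted, running_max = [0.0] * m, 0.0
    for rank, i in enumerate(order):
        running_max = max(running_max, min(1.0, (m - rank) * p_values[i]))
        adjusted[i] = running_max
    return adjusted
```

For example, a hypothetical 60/40 split among 100 participants gives χ² = 4.0 and P ≈ 0.046, which would be significant at α = 0.05 but not at the Bonferroni-adjusted threshold.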

Expert evaluation of coaching messages

We engaged two expert reviewers with active research programs applying the TTM to independently rate both the fine-tuned MHC-Coach-generated messages and the expert-written messages33 (selection procedure described in Methods Section 2). The evaluators were blinded as to whether a given message was MHC-Coach- or expert-generated. They rated each message on a 5-point Likert scale for two criteria: (1) the perceived effectiveness of the message in promoting increased physical activity and (2) the degree to which the message aligned with the recipient’s current stage of change.

Linguistic and stylistic features across generation methods

To compare generation strategies suitable for proactive, single-turn coaching, we produced twenty messages (four for each of the five TTM stages) with each of five techniques: the base LLaMA 3-70B model, MHC-Coach, few-shot prompting73, retrieval-augmented generation (RAG)44, and human experts.

Pretrained baseline

The unmodified LLaMA 3-70B-chat model was prompted with a brief TTM stage description and a request to generate a 20-word motivational message.

Few-shot prompting

We first inserted a brief system instruction: “You are a health coach trained in the Transtheoretical Model of Behavior Change.” This was followed by the stage-specific task prompt used in the baseline condition and then three demonstration messages matched to that stage. These exemplars were drawn from the expert corpus33 but were withheld from the subset of expert messages used for quantitative comparisons. This single concatenated prompt was provided to the unmodified LLaMA 3-70B model for each stage.
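The prompt assembly can be sketched as plain string concatenation in the order described above. The system instruction is quoted from the text; the example task prompt, the "Example n:" formatting, and the function name `build_few_shot_prompt` are hypothetical (the actual prompts are listed in Supplementary Table 1). The overlap check mirrors the requirement that exemplars be withheld from the expert messages used for comparison.

```python
SYSTEM_INSTRUCTION = (
    "You are a health coach trained in the Transtheoretical Model of Behavior Change."
)

def build_few_shot_prompt(task_prompt: str, exemplars: list[str], held_out: set[str]) -> str:
    """Concatenate the system instruction, the stage-specific task prompt, and three
    stage-matched demonstration messages into a single prompt string."""
    if len(exemplars) != 3:
        raise ValueError("expected exactly three demonstration messages")
    if any(m in held_out for m in exemplars):
        raise ValueError("exemplars must not overlap the messages used for comparison")
    demos = "\n".join(f"Example {i}: {m}" for i, m in enumerate(exemplars, start=1))
    return f"{SYSTEM_INSTRUCTION}\n\n{task_prompt}\n\n{demos}"
```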

Retrieval-augmented generation

We embedded the full fine-tuning corpus, consisting of the 3,268 stage-labeled expert messages from the crowdsourcing study33 and the exercise-science excerpts described in the Data section of the Methods, using the BAAI/bge-small-en-v1.5 encoder, and indexed it with Llama-Index (version 0.10.38). At inference time, the index returned the two 1000-token segments with the highest cosine similarity to the stage-specific query. These retrieved segments were prepended to the baseline prompt requesting a 20-word message, and the full context was then passed to the unmodified LLaMA 3-70B model for generation.
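The retrieval step can be illustrated with a dependency-free sketch of top-k cosine-similarity search over pre-computed segment embeddings, with k = 2 as in the study. It deliberately does not use the Llama-Index API: the toy three-dimensional vectors stand in for bge-small-en-v1.5 embeddings (which are 384-dimensional), and the function names and prompt layout are hypothetical.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def retrieve(query_vec, segments, k=2):
    """Return the texts of the k segments most cosine-similar to the query embedding."""
    ranked = sorted(segments, key=lambda seg: cosine(query_vec, seg["embedding"]), reverse=True)
    return [seg["text"] for seg in ranked[:k]]

def build_rag_prompt(retrieved, base_prompt):
    """Prepend the retrieved context to the baseline generation prompt."""
    return "\n\n".join(retrieved + [base_prompt])
```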

Expert messages

Twenty expert-written messages were randomly sampled from the crowdsourced corpus33 (selection procedure described in Methods Section 2).

Prompts used for each message generation strategy are provided in Supplementary Table 1.

Corpus and preprocessing

We systematically compared the linguistic and stylistic properties of the messages produced by each of the generation techniques described above. Each of the five generation methods produced 20 messages, yielding a corpus of 100 messages.

All analyses were performed in Python 3.1. Linguistic annotation, including part-of-speech tagging and dependency parsing, was performed with spaCy (version 3.7.2)77. Sentiment analysis used VADER (version 0.3.3)78, and readability scores were computed using Textstat (version 0.7.3)79.

Feature definition and extraction

The selected features reflect complementary dimensions of message quality, including length, lexical richness, emotional tone, behavioral focus, temporal specificity, and readability. Each feature is defined below, along with the method used for its extraction.

Word count

Calculates the length of each message as the number of alphabetic tokens identified by spaCy (version 3.7.2, en_core_web_sm model), excluding numerals and punctuation.
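Counting only alphabetic tokens can be sketched on an already-tokenized message. In the study, tokens came from spaCy, whose `is_alpha` token attribute flags purely alphabetic text; here a plain list of strings and Python's `str.isalpha()` stand in for that, and the token list is a hypothetical tokenizer output.

```python
def alphabetic_word_count(tokens):
    """Count tokens consisting only of letters; numerals and punctuation are excluded."""
    return sum(1 for tok in tokens if tok.isalpha())

# Hypothetical tokenizer output for "Walk 30 minutes today!"
tokens = ["Walk", "30", "minutes", "today", "!"]
print(alphabetic_word_count(tokens))  # 3
```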

Compound sentiment score

Assesses the overall emotional tone of a message, using the VADER sentiment analysis tool (version 0.3.3). VADER outputs a composite polarity score ranging from –1 (strongly negative) to +1 (strongly positive).
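The compound score is bounded because VADER normalizes the summed valence of a message into [-1, +1] with x / sqrt(x² + α), where α = 15 by default in the vaderSentiment implementation. The sketch below reproduces only that normalization step; lexicon lookup and VADER's rule-based adjustments (negation, intensifiers, punctuation emphasis) are omitted, and the example valence sums are hypothetical.

```python
import math

def vader_normalize(score: float, alpha: float = 15.0) -> float:
    """Map an unbounded valence sum into [-1, 1], as done for VADER's compound score."""
    norm = score / math.sqrt(score * score + alpha)
    return max(-1.0, min(1.0, norm))

print(vader_normalize(1.0))  # 0.25
```

Larger valence sums approach but never exceed 1, so strongly positive messages saturate near the top of the scale.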

Action-verb frequency

Counts the verbs that express the central actions of a message. Action verbs were identified using spaCy by selecting tokens with the part-of-speech tag VERB and excluding those with auxiliary or copular dependency roles. This excludes verbs serving grammatical support functions (e.g., is, have), focusing on core predicates expressing meaningful actions.
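The filtering rule can be sketched on tagger output represented as (text, pos, dep) triples, mirroring spaCy's `pos_` and `dep_` token attributes. The excluded dependency labels are an assumption about how "auxiliary or copular dependency roles" were operationalized, and the tagged example is hypothetical rather than actual spaCy output (spaCy would typically tag "have" here as AUX; it is tagged VERB below only to show the dependency filter).

```python
from typing import NamedTuple

class Token(NamedTuple):
    text: str
    pos: str  # coarse part-of-speech tag (spaCy: token.pos_)
    dep: str  # dependency label (spaCy: token.dep_)

EXCLUDED_DEPS = {"aux", "auxpass", "cop"}  # assumed auxiliary/copular roles

def action_verb_count(tokens):
    """Count VERB tokens that do not serve an auxiliary or copular function."""
    return sum(1 for t in tokens if t.pos == "VERB" and t.dep not in EXCLUDED_DEPS)

# Hypothetical tagging of "You have walked today, so keep moving!"
tagged = [
    Token("You", "PRON", "nsubj"),
    Token("have", "VERB", "aux"),  # illustrative: excluded by its dependency role
    Token("walked", "VERB", "ROOT"),
    Token("today", "NOUN", "npadvmod"),
    Token(",", "PUNCT", "punct"),
    Token("so", "ADV", "advmod"),
    Token("keep", "VERB", "conj"),
    Token("moving", "VERB", "xcomp"),
    Token("!", "PUNCT", "punct"),
]
print(action_verb_count(tagged))  # 3
```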

Temporal reference

Indicates whether the message is temporally anchored. This binary feature was assigned a value of 1 if spaCy recognized a DATE or TIME entity, or if the text included predefined temporal keywords (e.g., today, tomorrow, routine). Messages without such markers were assigned a value of 0.
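The binary flag can be sketched as follows, with entity labels passed in as they would come from spaCy's named-entity recognizer (`ent.label_`). Only today, tomorrow, and routine are taken from the text; the other keywords in `TEMPORAL_KEYWORDS` are hypothetical additions, and lowercase whole-word matching is an assumption.

```python
import re

TEMPORAL_KEYWORDS = {
    "today", "tomorrow", "routine",               # examples given in the Methods
    "tonight", "week", "daily", "morning",        # hypothetical additions
}

def temporal_reference(text: str, entity_labels) -> int:
    """Return 1 if a DATE/TIME entity is present or a temporal keyword occurs, else 0."""
    if any(label in {"DATE", "TIME"} for label in entity_labels):
        return 1
    words = set(re.findall(r"[a-z]+", text.lower()))
    return int(bool(words & TEMPORAL_KEYWORDS))
```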

Type–token ratio

Measures lexical diversity, calculated as the ratio of unique lowercase alphabetic tokens to the total number of such tokens in the message. Tokenization was performed using spaCy.
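The type–token ratio reduces to a short computation over lowercase alphabetic tokens. A list of strings stands in for spaCy's tokenizer output (the example tokens are hypothetical), and returning 0.0 for a message with no alphabetic tokens is an assumption.

```python
def type_token_ratio(tokens):
    """Unique lowercase alphabetic tokens divided by total alphabetic tokens."""
    words = [tok.lower() for tok in tokens if tok.isalpha()]
    return len(set(words)) / len(words) if words else 0.0

# Hypothetical tokens for "Move more today, move often!"
tokens = ["Move", "more", "today", ",", "move", "often", "!"]
print(type_token_ratio(tokens))  # 0.8
```

Because "Move" and "move" collapse to one type after lowercasing, the five alphabetic tokens contain four types.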

Exclamation count

Captures emphatic punctuation usage, quantified by the number of exclamation marks in each message.

Flesch Reading-Ease score

Estimates message readability using the Textstat 0.7.3 library, where higher scores correspond to simpler, more accessible text80.
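For reference, the Flesch Reading-Ease score combines average sentence length and average syllables per word. The sketch below applies the standard formula to counts supplied by the caller; Textstat derives these counts internally with its own sentence splitting and syllable estimation, so values from this function and from Textstat can differ for the same text. The example counts are hypothetical.

```python
def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch formula: 206.835 - 1.015 * (words/sentences) - 84.6 * (syllables/words)."""
    if words <= 0 or sentences <= 0:
        raise ValueError("words and sentences must be positive")
    return 206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)

# A hypothetical single-sentence, 20-word message with 30 syllables
score = flesch_reading_ease(words=20, sentences=1, syllables=30)
print(round(score, 3))  # 59.635
```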

For descriptive comparisons across generation methods, each of the seven features was averaged across the 20 messages produced by a given technique.

Statistical comparison across methods

To complement the descriptive comparisons, we conducted statistical tests to assess whether feature distributions differed significantly across generation methods. For each feature, we first tested the normality of per-message values within each method using the Shapiro–Wilk test81. When the distributions met the assumption of normality, we applied a one-way ANOVA; otherwise, we used the non-parametric Kruskal–Wallis test. All tests examined the main effect of the generation method on individual feature distributions. All statistical analyses were conducted in Python using the SciPy library (version 1.13)82.
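The test-selection rule can be sketched with SciPy's `shapiro`, `f_oneway`, and `kruskal` functions. Two details are assumptions not stated in the text: normality is judged at the 0.05 level, and ANOVA is used only when every method's values pass the Shapiro–Wilk test. The function name and the synthetic data are hypothetical.

```python
from statistics import NormalDist
from scipy import stats

def compare_methods(groups, alpha=0.05):
    """groups: mapping from method name to per-message values of one feature."""
    samples = list(groups.values())
    all_normal = all(stats.shapiro(s).pvalue > alpha for s in samples)
    if all_normal:
        result, test = stats.f_oneway(*samples), "one-way ANOVA"
    else:
        result, test = stats.kruskal(*samples), "Kruskal-Wallis"
    return test, result.statistic, result.pvalue

# Synthetic usage: evenly spaced normal quantiles, shifted by one unit per method
quantiles = [NormalDist().inv_cdf((i + 0.5) / 20) for i in range(20)]
normal_like = {f"method_{j}": [q + j for q in quantiles] for j in range(5)}
print(compare_methods(normal_like)[0])
```

Count-like features such as exclamation marks, which are mostly zero, would typically fail the normality check and fall through to the Kruskal–Wallis branch.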
